Simulation and Inference for Stochastic Differential Equations: Review

Solving an SDE analytically is possible in only a few instances (toy SDEs). In the majority of cases, one solves it numerically. Having said that, this book can be read by anyone who is interested in understanding SDEs better. Simulation is a great way to understand many aspects of stochastic processes. For example, you can read through Girsanov’s theorem for change of measure, but visualizing it through a few sample paths gives you a deeper understanding. I have managed to go over only the chapters that deal with simulation, so my summary covers only those chapters, i.e. the first two. I have postponed reading Chapter 3, which goes into inference, and Chapter 4, which comprises a set of advanced topics. Maybe I will find time to go over them in the future. For now, let me mention a few points from the book.

The first chapter is a crash course on stochastic processes and SDEs. It zips through basic probability concepts and then introduces change of measure. To give a practical application of change of measure, the chapter describes the preferential sampling technique and gives an example to illustrate its utility. All the relevant terms that one comes across in the context of stochastic processes are defined, such as filtrations, measurability with respect to a filtration, and quadratic variation. As expected, the chapter provides R code that simulates Brownian motion, geometric Brownian motion, the Brownian bridge, etc.
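To make the three processes concrete, here is a minimal sketch in Python rather than the book's R code. The function names, default parameters and grid construction are my own assumptions for illustration and are not taken from the book or the sde package. Geometric Brownian motion and the Brownian bridge are both built from one simulated Brownian path.

```python
import math
import random


def brownian_motion(n_steps, t_end=1.0, rng=random):
    """Return W(0), W(dt), ..., W(t_end) on an equally spaced grid."""
    dt = t_end / n_steps
    path = [0.0]
    for _ in range(n_steps):
        # Independent Gaussian increments with variance dt.
        path.append(path[-1] + rng.gauss(0.0, math.sqrt(dt)))
    return path


def geometric_brownian_motion(w, x0=1.0, mu=0.05, sigma=0.2, t_end=1.0):
    """Exact GBM on the same grid: X_t = x0 * exp((mu - sigma^2 / 2) t + sigma W_t)."""
    dt = t_end / (len(w) - 1)
    return [
        x0 * math.exp((mu - 0.5 * sigma ** 2) * i * dt + sigma * w_i)
        for i, w_i in enumerate(w)
    ]


def brownian_bridge(w, a=0.0, b=0.0):
    """Bridge from a (at time 0) to b (at the final time): a + W_t - (t/T)(W_T - b + a)."""
    n_steps = len(w) - 1
    return [a + w_i - (i / n_steps) * (w[-1] - b + a) for i, w_i in enumerate(w)]


rng = random.Random(42)          # fixed seed so the sketch is reproducible
w = brownian_motion(500, rng=rng)
gbm = geometric_brownian_motion(w)
bridge = brownian_bridge(w, a=0.0, b=0.0)
```

The bridge construction pins the path at both ends by subtracting a linear interpolation of the terminal value, which is why it needs the whole Brownian path up front.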

In fact, the good thing about this book is that it comes with the CRAN package “sde”, which has functions to simulate different stochastic processes. So, once you understand the way it has been coded, you can always make use of the functions provided in the package instead of coding everything from scratch. The Ito integral is defined as the limit of Ito integrals of simple bounded functions. This approximation procedure is explained very well in Oksendal’s book. However, if one is not inclined to go over the math and wants to see this visually, one can check out the code in this book, where a sequence of Ito integrals of non-anticipating functions converges in mean square to the Ito integral of a general integrand. All said and done, visual understanding is good but not enough. The effort of going through the math is well worth it, as you will know exactly why the whole thing works. The chapter also lists the important properties of the Ito integral.
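The mean-square convergence can be checked numerically on the one integral whose answer is easy to write down: the Ito integral of W with respect to W over [0, T] equals (W_T^2 - T)/2. The sketch below is my own Python illustration, not the book's code, and all names in it are hypothetical. It evaluates left-endpoint sums on coarser and finer grids of the same paths and estimates the mean squared distance to the closed form.

```python
import math
import random


def ito_sum(w, stride):
    """Left-endpoint sum of W dW on the grid obtained by keeping every `stride`-th point."""
    coarse = w[::stride]
    return sum(coarse[i] * (coarse[i + 1] - coarse[i]) for i in range(len(coarse) - 1))


def mean_square_error(n_fine, stride, n_paths, t_end=1.0, seed=0):
    """Estimate E[(ito_sum - (W_T^2 - T) / 2)^2] over simulated paths.

    n_fine must be divisible by stride so the coarse grid ends at t_end.
    """
    rng = random.Random(seed)
    dt = t_end / n_fine
    total = 0.0
    for _path in range(n_paths):
        w = [0.0]
        for _step in range(n_fine):
            w.append(w[-1] + rng.gauss(0.0, math.sqrt(dt)))
        exact = 0.5 * (w[-1] ** 2 - t_end)
        total += (ito_sum(w, stride) - exact) ** 2
    return total / n_paths


# Same seed, hence same paths; only the partition used for the sum changes.
for stride in (64, 16, 4, 1):
    print(256 // stride, "intervals:", mean_square_error(256, stride, n_paths=200))
```

The integrand is evaluated at the left endpoint of each interval, which is what makes the approximating sums non-anticipating. The printed errors should shrink as the number of intervals grows.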

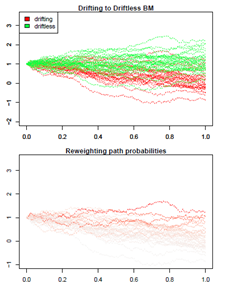

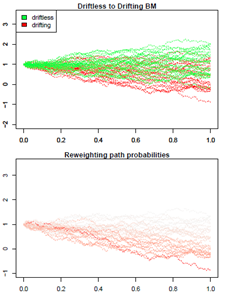

Diffusion processes are introduced, and conditions for existence and uniqueness of solutions are provided. These conditions are merely stated here; if one wants to understand the reasons behind them, I will mention Oksendal’s book again, which does a fantastic job of explaining the nuts and bolts. The thing I liked about this chapter is the visual illustration of Girsanov’s theorem, which lets one change the drift of a Brownian motion by changing the probability measure. The code in the book shows the changed likelihood of paths. I have tweaked it a bit to illustrate two cases: a change of measure that turns 1) a drifting Brownian motion into a driftless Brownian motion and 2) a driftless Brownian motion into a drifting Brownian motion. Here is a set of 30 sample paths and the respective path probabilities under the change of measure.

Darker paths carry higher weights. The visuals on the left-hand side show that to turn a drifting BM into a driftless BM, one has to give less weight to the paths that drift. The visuals on the right show that to turn a driftless BM into a drifting BM, one has to give more weight to the paths that tend to drift. This reweighting is exactly what the Radon-Nikodym derivative does.
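The two cases can also be checked numerically. The following is a minimal Python sketch under my own assumptions (constant drift theta, unit volatility, hypothetical function names), not the book's R code or my tweak of it. For a constant drift, the Radon-Nikodym weight of a path depends only on its terminal value, so the sketch keeps just that value per path and verifies that the reweighted mean behaves like the target process.

```python
import math
import random


def terminal_values(n_paths, drift, t_end=1.0, n_steps=20, seed=0):
    """Simulate X_t = drift * t + W_t step by step and return each path's X_T."""
    rng = random.Random(seed)
    dt = t_end / n_steps
    values = []
    for _path in range(n_paths):
        x = 0.0
        for _step in range(n_steps):
            x += drift * dt + rng.gauss(0.0, math.sqrt(dt))
        values.append(x)
    return values


def weight_remove_drift(x_end, theta, t_end=1.0):
    """dQ/dP for a path with drift theta under P that should be driftless under Q."""
    return math.exp(-theta * x_end + 0.5 * theta ** 2 * t_end)


def weight_add_drift(w_end, theta, t_end=1.0):
    """dQ/dP for a driftless path under P that should have drift theta under Q."""
    return math.exp(theta * w_end - 0.5 * theta ** 2 * t_end)


def reweighted_mean(values, weights):
    """Monte Carlo estimate of E_Q[X_T] = E_P[X_T * dQ/dP]."""
    return sum(v * w for v, w in zip(values, weights)) / len(values)


theta = 0.5
drifting = terminal_values(10000, drift=theta, seed=1)
driftless = terminal_values(10000, drift=0.0, seed=2)

# Case 1 estimates E_Q[X_T] = 0; case 2 estimates E_Q[W_T] = theta * T.
mean_case1 = reweighted_mean(drifting, [weight_remove_drift(x, theta) for x in drifting])
mean_case2 = reweighted_mean(driftless, [weight_add_drift(w, theta) for w in driftless])
print(mean_case1, mean_case2)
```

In case 1 the weight decreases with the terminal value, so paths that drifted upward count for less. In case 2 it increases, so paths that happened to wander upward count for more, which matches the shading in the plots.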

The chapter ends by stating the following list of models/SDEs and computing their moments, conditional density, conditional expectation and conditional variance (wherever possible):

Ornstein-Uhlenbeck or Vasicek process

Geometric Brownian motion model

Cox-Ingersoll-Ross model

Chan-Karolyi-Longstaff-Sanders (CKLS) family of models

Hyperbolic processes

Nonlinear mean reversion: Aït-Sahalia model

Double-well potential model

Jacobi diffusion process

Ahn and Gao model

Radial Ornstein-Uhlenbeck process

Pearson diffusions

The stochastic cusp catastrophe model

Generalized inverse Gaussian diffusions

The second chapter starts with the two most popular techniques for solving SDEs numerically: the Euler approximation and the Milstein scheme. The Lamperti transform is introduced to show that the Euler approximation applied to the Lamperti transform of an SDE is equivalent to the Milstein scheme. The transform is illustrated by applying it to GBM and the CIR process, which results in simplified SDEs. The workhorse of the chapter, as well as the book, is the function sde.sim().

The following can be accomplished using sde.sim():

Processes that can be simulated: OU process, GBM, Cox-Ingersoll-Ross process, Vasicek process

Simulation method: Euler, KPS, Milstein, Milstein2, conditional density, Exact Algorithm, Ozaki, and Shoji

Law: one can simulate from the conditional law or the stationary law for the above four processes

Exact Algorithm: a very powerful algorithm that uses a biased Brownian motion and change of measure principles to simulate a solution of the SDE. The algorithm uses the hitting time of a Poisson process for simulating the SDE.
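To illustrate what simulating from the conditional law and the stationary law means for one of the processes listed above, here is a minimal Python sketch for the OU process. It does not call or reproduce sde.sim(). The parameterization dX = theta * (mu - X) dt + sigma dW and every name below are my own assumptions. The transition law of this process is Gaussian, so each step can be drawn exactly, and the chain can be started from the stationary law N(mu, sigma^2 / (2 * theta)).

```python
import math
import random


def ou_exact_step(x, dt, theta, mu, sigma, rng):
    """Draw X_{t+dt} given X_t = x from the Gaussian transition law of the OU process."""
    mean = mu + (x - mu) * math.exp(-theta * dt)
    var = sigma ** 2 * (1.0 - math.exp(-2.0 * theta * dt)) / (2.0 * theta)
    return rng.gauss(mean, math.sqrt(var))


def ou_euler_step(x, dt, theta, mu, sigma, rng):
    """One Euler step for the same SDE, for comparison."""
    return x + theta * (mu - x) * dt + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)


def long_run_variance(step, n_steps, dt, theta=1.0, mu=0.0, sigma=1.0, seed=0):
    """Sample variance of a long chain started from the stationary law."""
    rng = random.Random(seed)
    x = rng.gauss(mu, sigma / math.sqrt(2.0 * theta))  # stationary draw
    xs = []
    for _ in range(n_steps):
        x = step(x, dt, theta, mu, sigma, rng)
        xs.append(x)
    m = sum(xs) / len(xs)
    return sum((v - m) ** 2 for v in xs) / len(xs)


# Deliberately large time step: exact sampling is unaffected, Euler is biased.
var_exact = long_run_variance(ou_exact_step, 50000, dt=0.5)
var_euler = long_run_variance(ou_euler_step, 50000, dt=0.5)
print(var_exact, var_euler)  # the true stationary variance is sigma^2 / (2 * theta) = 0.5
```

With a coarse step the exact sampler still reproduces the stationary variance, while the Euler chain settles at a visibly larger value. That gap is the price of discretization.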

The chapter also gives a detailed example that compares the performance of the Milstein and Euler approximation methods. Even though simulating from the transition density is possible in only a few cases, the chapter suggests that wherever possible it should be preferred over other simulation methods. There is a discussion of local linearization methods that relax some assumptions of the Euler/Milstein schemes, i.e. the drift and diffusion coefficients are not assumed constant over each subinterval of the time partition.
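A comparison in the same spirit can be sketched for GBM, where the exact solution is known and corresponds to simulating from the lognormal transition density. This is my own Python illustration with hypothetical names and parameter values, not the example from the book. Both schemes are driven by the same Brownian increments, and the error is the mean absolute distance to the exact terminal value.

```python
import math
import random


def strong_errors(n_steps, n_paths=2000, x0=1.0, mu=0.5, sigma=1.0, t_end=1.0, seed=0):
    """Mean absolute terminal error of Euler and Milstein against the exact GBM solution."""
    rng = random.Random(seed)
    dt = t_end / n_steps
    err_euler = 0.0
    err_milstein = 0.0
    for _path in range(n_paths):
        x_e = x0
        x_m = x0
        w = 0.0
        for _step in range(n_steps):
            dw = rng.gauss(0.0, math.sqrt(dt))
            # Euler: drift * dt + diffusion * dW.
            x_e += mu * x_e * dt + sigma * x_e * dw
            # Milstein adds 0.5 * b(x) * b'(x) * (dW^2 - dt), with b(x) = sigma * x.
            x_m += (mu * x_m * dt + sigma * x_m * dw
                    + 0.5 * sigma ** 2 * x_m * (dw * dw - dt))
            w += dw
        exact = x0 * math.exp((mu - 0.5 * sigma ** 2) * t_end + sigma * w)
        err_euler += abs(x_e - exact)
        err_milstein += abs(x_m - exact)
    return err_euler / n_paths, err_milstein / n_paths


for n in (16, 64, 256):
    e, m = strong_errors(n, n_paths=500)
    print(n, "steps: Euler", e, "Milstein", m)
```

The Milstein correction term costs almost nothing here because the derivative of the diffusion coefficient is just sigma, and it should give a noticeably smaller error at the same step size.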

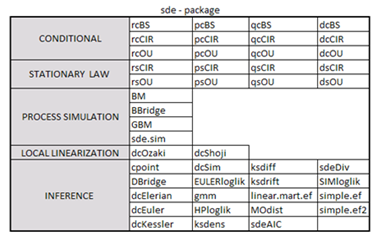

In the “sde” package, there are about 45 functions that one can use for SDE simulation and inference. I have tried categorizing the functions below (based on my limited understanding):